Blossoming Intelligence: How To Run Spring AI Locally With Ollama

An example of using local AI models in your Spring Boot app.

Nobody can dispute that AI is here to stay. Among its many benefits, developers are using it to boost their productivity. It is also expected to be offered for a fee, as SaaS or another kind of service, once it has gained the necessary trust from enterprises. Still, we can run pre-trained models locally and incorporate them into our current app.

In this short article, we’ll look at how easy it is to create a chatbot backend powered by Spring and Ollama using the Llama 3 model.

Tech Stack

This project is built using:

- Java 21.

- Spring Boot 3.2.5 with WebFlux.

- Spring AI (a pre-1.0 release, the line that still ships OllamaChatClient).

- Ollama 0.1.36.

Ollama Setup

To install Ollama locally, head to https://ollama.com/download and install it using the proper executable for your OS.

You check is installed by running the following command:

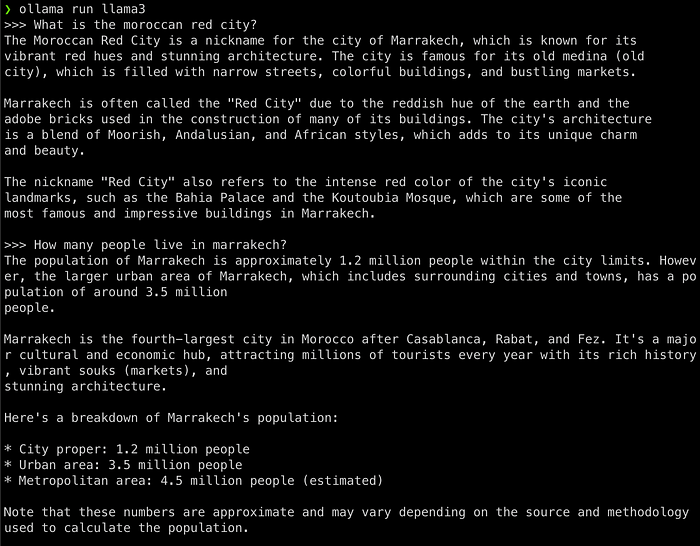

ollama --versionYou can directly pull a model from Ollama Models) and run it using the ollama cli, in my case I used the llama3 model:

    ollama pull llama3 # Should take a while.
    ollama run llama3

Let’s test it out with a simple prompt:

To exit, use the command:

    /bye

Talking Spring

The Spring application will have the following properties:

    spring.ai.ollama.base-url=http://localhost:11434
    spring.ai.ollama.chat.model=llama3

Next comes our chat package, which will have a chat config bean to handle the chat client setup:

    package io.daasrattale.generalknowledge.chat;

    import org.springframework.ai.chat.ChatClient;
    import org.springframework.ai.ollama.OllamaChatClient;
    import org.springframework.ai.ollama.api.OllamaApi;
    import org.springframework.ai.ollama.api.OllamaOptions;
    import org.springframework.context.annotation.Bean;
    import org.springframework.context.annotation.Configuration;

    @Configuration
    public class ChatConfig {

        // Builds an Ollama-backed ChatClient with a default temperature of 0.9.
        @Bean
        public ChatClient chatClient() {
            return new OllamaChatClient(new OllamaApi())
                    .withDefaultOptions(OllamaOptions.create()
                            .withTemperature(0.9f));
        }
    }

FYI, model temperature is a parameter that controls how random a language model’s output is. Here the temperature is set to 0.9 to make the model more random and more willing to take risks in its answers.
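To make the temperature remark concrete, here is a minimal, self-contained sketch of how temperature is commonly applied: the model’s raw scores (logits) are divided by the temperature before a softmax turns them into probabilities. This is a generic illustration, not Ollama’s or Spring AI’s actual code; the class name, method name, and logit values are made up for the example.

```java
import java.util.Arrays;

class TemperatureDemo {

    // Converts raw scores (logits) into probabilities after dividing by the temperature.
    static double[] softmaxWithTemperature(double[] logits, double temperature) {
        if (temperature <= 0) {
            throw new IllegalArgumentException("temperature must be positive");
        }
        // Find the largest scaled logit so we can subtract it for numerical stability.
        double max = Double.NEGATIVE_INFINITY;
        for (double logit : logits) {
            max = Math.max(max, logit / temperature);
        }
        double[] probs = new double[logits.length];
        double sum = 0.0;
        for (int i = 0; i < logits.length; i++) {
            probs[i] = Math.exp(logits[i] / temperature - max);
            sum += probs[i];
        }
        // Normalize so the probabilities add up to 1.
        for (int i = 0; i < probs.length; i++) {
            probs[i] /= sum;
        }
        return probs;
    }

    public static void main(String[] args) {
        double[] logits = {2.0, 1.0, 0.5}; // hypothetical scores for three candidate tokens
        System.out.println("T=0.2: " + Arrays.toString(softmaxWithTemperature(logits, 0.2)));
        System.out.println("T=0.9: " + Arrays.toString(softmaxWithTemperature(logits, 0.9)));
    }
}
```

With these made-up scores, the top candidate gets roughly 0.99 of the probability at a temperature of 0.2 but only about 0.66 at 0.9, which is why higher values produce more varied answers.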

The last step is to create a simple chat REST controller:

    package io.daasrattale.generalknowledge.chat;

    import org.springframework.ai.ollama.OllamaChatClient;
    import org.springframework.http.ResponseEntity;
    import org.springframework.web.bind.annotation.GetMapping;
    import org.springframework.web.bind.annotation.RequestMapping;
    import org.springframework.web.bind.annotation.RequestParam;
    import org.springframework.web.bind.annotation.RestController;
    import reactor.core.publisher.Mono;
    import reactor.core.scheduler.Schedulers;

    @RestController
    @RequestMapping("/v1/chat")
    public class ChatController {

        private final OllamaChatClient chatClient;

        public ChatController(OllamaChatClient chatClient) {
            this.chatClient = chatClient;
        }

        // Falls back to a default prompt when the "prompt" query parameter is missing.
        @GetMapping
        public Mono<ResponseEntity<String>> generate(
                @RequestParam(defaultValue = "Tell me to add a proper prompt in a funny way") String prompt) {
            // chatClient.call(...) blocks until the model answers, so run it off the event loop.
            return Mono.fromCallable(() -> chatClient.call(prompt))
                    .subscribeOn(Schedulers.boundedElastic())
                    .map(ResponseEntity::ok);
        }
    }

Let’s try calling GET /v1/chat with an empty prompt:

What about a simple general knowledge question:

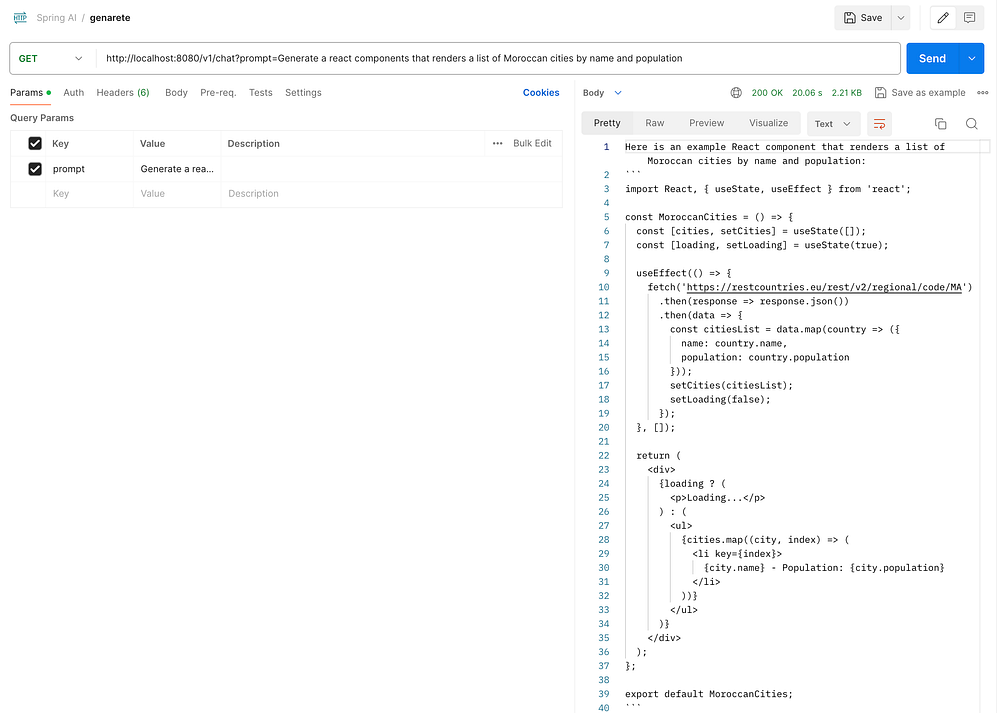

Of course, let’s ask for some code:

The API URL in the generated code is not valid, but the rest of the code works.
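To reproduce calls like the ones above without a browser or curl, here is a minimal sketch using the JDK’s built-in HttpClient. It assumes the app listens on http://localhost:8080 (Spring Boot’s default port, which this article does not state); the class name and the example prompt are made up for illustration.

```java
import java.net.URI;
import java.net.URLEncoder;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;
import java.nio.charset.StandardCharsets;

class ChatEndpointClient {

    // Assumption: the app runs locally on Spring Boot's default port 8080.
    static final String BASE_URL = "http://localhost:8080";

    // Builds the GET /v1/chat URI. A blank prompt is omitted entirely,
    // so the controller's default prompt is used instead.
    static URI chatUri(String prompt) {
        if (prompt == null || prompt.isBlank()) {
            return URI.create(BASE_URL + "/v1/chat");
        }
        // URLEncoder emits "+" for spaces; use "%20" so the value is unambiguous in a URL.
        String encoded = URLEncoder.encode(prompt, StandardCharsets.UTF_8).replace("+", "%20");
        return URI.create(BASE_URL + "/v1/chat?prompt=" + encoded);
    }

    public static void main(String[] args) throws Exception {
        HttpClient client = HttpClient.newHttpClient();
        // Arbitrary example prompt, not one taken from the article.
        HttpRequest request = HttpRequest.newBuilder(chatUri("What is the capital of Morocco?"))
                .GET()
                .build();
        HttpResponse<String> response = client.send(request, HttpResponse.BodyHandlers.ofString());
        System.out.println(response.statusCode());
        System.out.println(response.body());
    }
}
```

Running main requires both Ollama and the Spring app to be up; chatUri on its own has no such dependency and can be checked in isolation.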

Finally

Running models locally with such ease and simplicity is a true added value. Still, the models used must be carefully vetted.

You can find the source code in this GitHub repository. Make sure to star it if you find it useful :)

Resources

https://spring.io/projects/spring-ai

https://docs.spring.io/spring-ai/reference/api/clients/ollama-chat.html